The guide I used for installing Docker on Debian 13 (Trixie)

Here is the DNS fix I used for containers that started having DNS issues after I moved to Debian 13.

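Create or edit /etc/docker/daemon.json with the following (8.8.8.8 and 8.8.4.4 are Google Public DNS). Then restart Docker, for example with sudo systemctl restart docker, so the daemon picks up the change: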

```json
{
  "dns": ["8.8.8.8", "8.8.4.4"]
}
```
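If daemon.json already exists, overwriting it with the snippet above would drop any other settings in it. The sketch below merges only the "dns" key into whatever is already there. This is a minimal sketch under a few assumptions: the file holds a single JSON object, and you run it as root. The function name set_docker_dns is hypothetical, not part of any Docker tooling.

```python
import json
from pathlib import Path


def set_docker_dns(path, servers):
    """Merge a "dns" list into a Docker daemon.json, keeping all other keys.

    Hypothetical helper: assumes the file (if present) contains one JSON object.
    """
    config_path = Path(path)
    # Treat a missing or empty file as an empty config.
    text = config_path.read_text() if config_path.exists() else ""
    config = json.loads(text) if text.strip() else {}
    if not isinstance(config, dict):
        raise ValueError(f"{config_path} does not contain a JSON object")
    # Replace only the DNS servers; other daemon settings are left untouched.
    config["dns"] = list(servers)
    config_path.write_text(json.dumps(config, indent=2) + "\n")
    return config
```

For example, running set_docker_dns("/etc/docker/daemon.json", ["8.8.8.8", "8.8.4.4"]) as root writes the same setting as the snippet above. Docker still has to be restarted afterwards.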

The services I have running

13ft Ladder

It pretends to be Googlebot (Google’s web crawler) and receives the same content that Google gets. Many sites serve Google the full page so the article can be indexed properly, and 13ft takes advantage of that.
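The core idea is that the client identifies itself with Googlebot's User-Agent header. The sketch below is a rough illustration of that idea, not 13ft's actual code. It builds, but does not send, such a request with Python's standard urllib. The UA string is one of the Googlebot strings Google documents. The helper name googlebot_request is hypothetical.

```python
from urllib.request import Request

# One of the Googlebot User-Agent strings documented by Google.
GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"


def googlebot_request(url):
    """Build (but do not send) a GET request that identifies itself as Googlebot.

    Hypothetical helper for illustration; pass the result to
    urllib.request.urlopen() to actually fetch the page.
    """
    return Request(url, headers={"User-Agent": GOOGLEBOT_UA})
```

This only changes the header. Sites that verify Googlebot with a reverse DNS lookup of the requesting IP will not be fooled by it.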

CyberChef

CyberChef is the Cyber Swiss Army Knife web app for encryption, encoding, compression and data analysis.

Dozzle

Simple Container Monitoring and Logging

FreshRSS

Open-source, self-hosted RSS feed aggregator

Apache Guacamole

A clientless remote desktop gateway. It supports standard protocols like VNC, RDP, and SSH.

Homarr

A sleek, modern dashboard that puts all of your apps and services at your fingertips

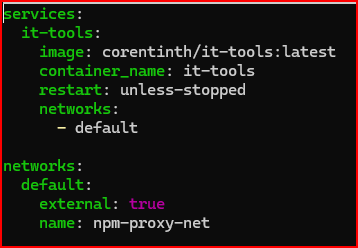

IT Tools

Collection of handy online tools for developers, with great UX as a web app.

Jellyfin

Jellyfin is the volunteer-built media solution that puts you in control of your media. Stream to any device from your own server, with no strings attached. Your media, your server, your way.

NetAlertX

Discover and visualize all your networks

Netbird

NetBird combines a WireGuard®-based overlay network with Zero Trust Network Access, providing a unified open source platform for reliable and secure connectivity.

Netdata

Real Time Infrastructure Monitoring

Nginx Proxy Manager

A web UI for managing Nginx reverse proxy hosts, including free Let’s Encrypt SSL certificates

OpenSpeedTest

SpeedTest by OpenSpeedTest™ is a Free and Open-Source HTML5 Network Performance Estimation Tool

Open WebUI

Open WebUI is an extensible, self-hosted AI interface

RustDesk

Open-Source Remote Access and Support Software

WUD (aka What’s up Docker?)

Lets you know which Docker containers have updated images available