I last did a Proxmox vs XCP-ng comparison back in 2022, and a lot has changed since then. Both platforms have matured and added features, and more importantly, they have clarified what problems they are trying to solve.

This updated comparison isn’t about declaring a winner. It’s about understanding different architectural approaches: how each platform handles management, storage, backups, and disaster recovery in real-world environments.

Proxmox VE runs on a full Debian base but relies on Proxmox-curated repositories for core platform components, including Ceph, which is integrated, version-pinned, and supported as part of the hyper-converged stack.

XCP-ng takes an appliance-style approach with a minimal host OS, updates controlled by Vates, and higher-level management centered around Xen Orchestra rather than the host itself.

My XCP-ng Setup Training Guide: How to Set Up XCP-ng Right the First Time – Best Practices and Configuration Tips (Computer Hardware & Server Infrastructure Builds, Lawrence Systems Forums)

Legend

Supported / Native

Not supported

Supported with limitations, external components, or important caveats

Proxmox VE vs XCP-ng — Core Platform Comparison

| Feature / Capability | Proxmox VE | XCP-ng |
|---|---|---|
| Hypervisor | KVM (QEMU) | Xen |
| Host OS | Debian-based | Custom minimal Linux |
| Free & Open Source | | |
| Paid Subscription | | |
| SLA Support Agreements | | |
| Management Model | Integrated per-cluster platform | XO Lite (beta) or XO central manager |
| Built-in Web UI | | |
| Datacenter Manager (multi-cluster) | | |
| API Coverage | | |
| CLI Management | | |
| Clustering | | |
| Native Ceph Integration | | |
| Ceph Lifecycle Management | | |
| Hyper-converged Storage | | |
| ZFS Integration | | |
| Shared Storage Support | | |
| Local Storage Support | | |
| Snapshots | | |
| Live Migration | | |
| HA (node failure) | | |
| Software Defined Networking | | |
| VLAN / VXLAN | | |
| RBAC / Permissions | | |
| Containers | | |
| VM Templates | | |
| PCIe / GPU Passthrough | | |
| SR-IOV | | |
| USB Passthrough | | |
| Automation / IaC |

Backup and DR Options

XCP-ng treats backups as a disaster recovery workflow, with strong emphasis on automation, restore validation, and replication.

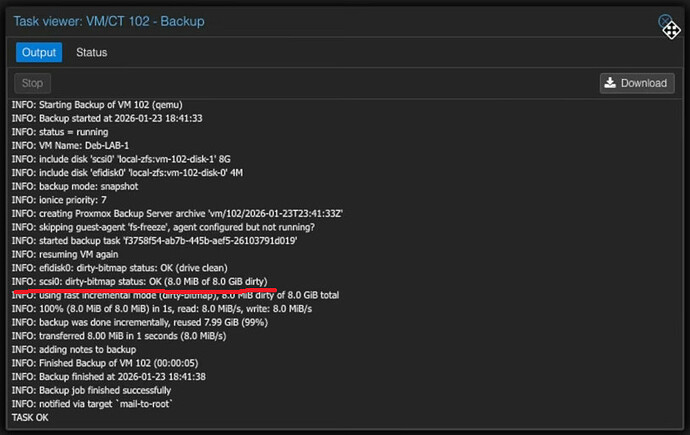

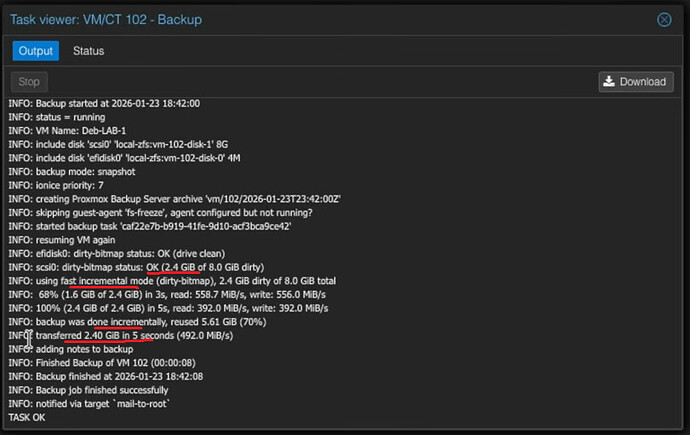

Proxmox treats backups as verifiable, deduplicated data objects, prioritizing integrity, efficiency, and isolation via Proxmox Backup Server.
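To make "verifiable, deduplicated data objects" concrete, here is a minimal Python sketch of the general idea: content-addressed chunk storage. It is an illustration only, not Proxmox Backup Server's actual implementation; the `ChunkStore` class, its method names, and the tiny 4-byte chunk size are all assumptions chosen to keep the demo readable.

```python
import hashlib

# Hypothetical, deliberately tiny chunk size so the demo is easy to follow.
CHUNK_SIZE = 4


class ChunkStore:
    """Toy content-addressed store: each unique chunk is kept once,
    keyed by its SHA-256 digest. Illustrative only."""

    def __init__(self):
        self.chunks = {}  # digest (hex string) -> chunk bytes

    def backup(self, data: bytes) -> list[str]:
        """Split data into fixed-size chunks, store new ones, return the index."""
        index = []
        for offset in range(0, len(data), CHUNK_SIZE):
            chunk = data[offset:offset + CHUNK_SIZE]
            digest = hashlib.sha256(chunk).hexdigest()
            # A chunk already present (from any backup) is not stored again.
            self.chunks.setdefault(digest, chunk)
            index.append(digest)
        return index

    def verify(self, index: list[str]) -> bool:
        """Re-hash every referenced chunk and compare it with its digest."""
        return all(
            digest in self.chunks
            and hashlib.sha256(self.chunks[digest]).hexdigest() == digest
            for digest in index
        )

    def restore(self, index: list[str]) -> bytes:
        """Reassemble the original data from its chunk index."""
        return b"".join(self.chunks[digest] for digest in index)


store = ChunkStore()
vm_a = b"AAAABBBBCCCC"  # stand-in for one VM's disk image
vm_b = b"AAAABBBBDDDD"  # a second VM sharing two of its three chunks
index_a = store.backup(vm_a)
index_b = store.backup(vm_b)

print(len(index_a) + len(index_b), "chunks referenced,", len(store.chunks), "stored")
print("verified:", store.verify(index_a) and store.verify(index_b))
```

Because a backup is just a list of digests, two VMs that share data share stored chunks, and integrity can be checked at any time by re-hashing what is on disk. That is the property the "data object" framing is pointing at.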

| Backup / DR Feature | Proxmox VE | XCP-ng |
|---|---|---|
| Backup Management UI | | |
| Backup Target | | |
| Incremental Backups | | |
| Deduplication | | |
| Cross-VM Deduplication | | |
| Compression | | |
| Encryption | | |
| Automated Restore Testing | | |
| File-Level Restore | | |
| VM-Level Restore | | |
| Backup Scheduling | | |
| Replication of All Backups | | |
| Continuous Replication | | |
| Warm Standby VM |