Hi everyone,

I’m currently working on redesigning the IT infrastructure for a retail company with 8 stores + a Head Office (HQ). I’d love your insights on architecture, storage, and hypervisor choices based on what I have and what I aim to achieve.

CURRENT SITUATION

- The infrastructure is fully physical.

- HQ has 4x HPE DL380 Gen10 servers, each with:

  - 2x Intel Xeon Gold 6138 (20 cores / 40 threads per CPU)

  - 64GB RAM per server (can be upgraded)

  - Onboard RAID controller (Smart Array)

- All servers came with 4x SAS HDDs (10K RPM 2.4TB – HPE 881507-001).

- We also have old Dell R610s at shops running lightweight apps.

- Users connect to servers via RDP sessions (Remote Desktop), even for very light workloads.

- With no virtualization in place, this results in wasted power, fragmented management, and underutilized resources.

GOALS

- Centralize all VM workloads at HQ (no more servers in stores).

- Reduce power consumption and physical complexity.

- Achieve cost-effective, stable, long-term infrastructure.

- Virtualize POS app servers, File Server, Finance (SAGE), AD/DNS, and some Linux tools (Wazuh, Zabbix, GLPI).

- Ensure safe backups and possibly minimal HA without overkill.

CONCERNS

- I considered Ceph, but I’m worried about:

  - Complexity of setup and maintenance

  - Need for 10GbE storage backend

  - Higher resource usage and possible instability

- I also wonder if ZFS is reliable enough for production, especially with many Windows Server VMs.

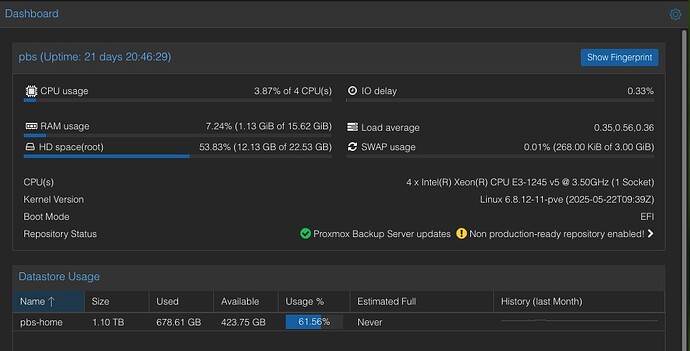

- Is it safe to run PBS in-cluster?

- Can I reuse the SAS 10K disks efficiently, or should I ditch them?

- Should I mix SATA/NVMe and SAS in this setup?

OPTIONS I’M CONSIDERING

Option 1: 3x Standalone Proxmox Hosts + 1 Backup Host

- 3 standalone PVE nodes (each with ZFS local mirror for VMs)

- Node4 hosts PBS and TrueNAS (as VMs)

- Each node handles part of the VM load

- Simple setup, no clustering, easy maintenance

Pros:

- Simplicity, isolation

- Low overhead

- Clean backups

Cons:

- No HA

- No shared storage

- Manual failover
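To make "each node handles part of the VM load" concrete, here is a rough placement sketch in Python. The VM names and RAM sizes are hypothetical placeholders (I have not measured the real workloads yet), and the 16 GB host reserve for the hypervisor and ZFS ARC is an assumption, not a Proxmox default.

```python
# Hypothetical VM RAM requirements in GB; replace with measured values.
VMS = {
    "ad-dns": 8,
    "file-server": 16,
    "sage-finance": 32,
    "pos-app": 32,
    "wazuh": 16,
    "zabbix": 8,
    "glpi": 8,
}

NODE_RAM_GB = 64       # per-node RAM from the post (upgradable)
HOST_RESERVE_GB = 16   # assumed reserve for the hypervisor and ZFS ARC


def place_vms(vms, node_count, node_ram_gb, reserve_gb):
    """Greedy placement: largest VM first, onto the node with the most free RAM."""
    free = [node_ram_gb - reserve_gb] * node_count
    placement = {i: [] for i in range(node_count)}
    for name, ram in sorted(vms.items(), key=lambda kv: kv[1], reverse=True):
        target = max(range(node_count), key=lambda i: free[i])
        if free[target] < ram:
            raise ValueError(f"{name} ({ram} GB) does not fit on any node")
        free[target] -= ram
        placement[target].append(name)
    return placement, free


if __name__ == "__main__":
    placement, free = place_vms(VMS, 3, NODE_RAM_GB, HOST_RESERVE_GB)
    for node, names in placement.items():
        print(f"node{node + 1}: {names} (free: {free[node]} GB)")
```

With standalone hosts there is no automatic failover, so the free RAM left on each node is also a rough indicator of how much could be restored onto it manually if another node dies.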

Option 2: 2x Hyper-V Hosts + 1 Proxmox for Tools

- 2 Hyper-V nodes for Windows VMs

- 1 Proxmox node for Linux tools (Wazuh, Zabbix, etc.)

- Use Windows-friendly backups (Veeam / Windows Server Backup)

Pros:

- Simpler for Windows

- Familiar to staff

- Easy RDS-style setup

Cons:

- Fragmented stack

- No advanced features (ZFS, PBS)

- Monitoring/tools on separate platform

Option 3: Full 4-Node Proxmox HA Cluster

- All 4 DL380s in a single Proxmox Cluster

- Each node runs ZFS (NVMe mirror) locally

- VMs distributed across nodes

- PBS and TrueNAS run as VMs on Node4

- SAS HDDs passed through via HBA to PBS / TrueNAS

Pros:

- Single pane of glass

- Native HA / quorum

- Optimal reuse of hardware

- PBS + NAS integrated

Cons:

- A bit more complex (quorum, fencing)

- PBS in-cluster (questionable redundancy)

- No shared storage, so only limited live migration unless manually handled
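On the quorum point, a small sketch of the majority arithmetic may help frame the question. It assumes the default setup where each node carries exactly one vote and the cluster needs a strict majority to stay quorate; the function names are my own, not part of any Proxmox tooling.

```python
def quorum_votes(total_votes: int) -> int:
    """Votes needed for a strict majority."""
    return total_votes // 2 + 1


def tolerated_failures(total_votes: int) -> int:
    """How many single-vote nodes can fail while the rest stay quorate."""
    return total_votes - quorum_votes(total_votes)


if __name__ == "__main__":
    for nodes in (3, 4, 5):
        print(
            f"{nodes} nodes: quorum={quorum_votes(nodes)}, "
            f"tolerated failures={tolerated_failures(nodes)}"
        )
```

Under these assumptions a 4-node cluster needs 3 votes and tolerates only one node failure, the same as a 3-node cluster, and a 2/2 network split leaves neither half quorate. That is part of why I am unsure whether the fourth node is better used inside the cluster or as a separate backup host.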

HARDWARE BEING CONSIDERED

- Add 2x 960GB SATA SSDs per node (RAID 1 boot volume, ext4, via Smart Array)

- Add 2x Micron 7450 Pro/Max 1.92TB U.2 per node (ZFS mirror)

- Add LSI 9300-8i HBA to handle SAS HDD passthrough

- Reuse 10K SAS drives (2.4TB x12) on Node4 for PBS / TrueNAS via ZFS RAIDZ2

- Use FortiGate 81F + Cisco C9200L 10Gb Core Switch
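For a rough sense of what the disk layout above yields, here is a back-of-the-envelope capacity sketch. It uses the simple rule that a RAIDZ vdev gives roughly (disks minus parity) times disk size and a two-way mirror gives one disk's worth. It deliberately ignores ZFS metadata, padding, TB vs TiB conversion, and the free-space headroom a pool needs, so real usable space will be lower.

```python
def raidz_usable_tb(disks: int, disk_tb: float, parity: int) -> float:
    """Rough usable capacity of one RAIDZ vdev: data disks times disk size."""
    if disks <= parity:
        raise ValueError("need more disks than parity devices")
    return (disks - parity) * disk_tb


def mirror_usable_tb(disk_tb: float) -> float:
    """A two-way mirror exposes the capacity of a single disk."""
    return disk_tb


if __name__ == "__main__":
    # 12x 2.4 TB SAS drives in RAIDZ2 on Node4 (figures from the list above)
    backup_pool = raidz_usable_tb(disks=12, disk_tb=2.4, parity=2)
    # 2x 1.92 TB U.2 NVMe in a ZFS mirror on each node
    vm_pool = mirror_usable_tb(1.92)
    print(f"RAIDZ2 12 x 2.4 TB: ~{backup_pool:.1f} TB before overhead")
    print(f"NVMe mirror per node: ~{vm_pool:.2f} TB before overhead")
```

So the plan works out to roughly 24 TB raw for PBS / TrueNAS and a little under 2 TB of VM storage per node before overhead, which is the budget the VM disks and backup retention have to fit into.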

QUESTIONS

- Is Option 3 (Proxmox HA) a good long-term approach for this small/medium setup?

- Would Ceph really be worth the complexity in my case?

- Can I mix ZFS local pools per node with Proxmox HA cluster safely?

- Is it safe to run PBS in-cluster (Node4)?

- Would it be a mistake to reuse the SAS 10K drives via passthrough for PBS/NAS?

- For Windows workloads, is ZFS still the best choice or should I use ext4/RAID?

- Should I prefer ZFS boot or ext4 boot for these servers?

- Any advice on using Micron 7450 Pro vs Samsung PM893 vs HPE SSDs?

I’m seeking the most balanced, stable, and future-proof setup using the 4 DL380 Gen10 servers I already own. I want to make informed hardware and configuration choices (disk type, FS, layout, VM placement, PBS strategy…) and avoid the usual regrets (wasting SAS drives, under-using NVMe, no HA, etc.).

Any expert advice, real-world feedback, or example architectures would be tremendously appreciated.

Thanks in advance!