When setting up ZFS pools, performance, capacity, and data integrity all have to be balanced based on your needs and budget. It’s not an easy decision to make, so I wanted to post some references here to help you make a more informed one.

                 capacity
                    /\
                   /  \
                  /    \
                 /      \
    performance /________\ integrity

These are the metrics to consider when deciding how to lay out your drives in FreeNAS.

- Read I/O operations per second (IOPS)

- Write IOPS

- Streaming read speed

- Streaming write speed

- Storage space efficiency (usable capacity after parity versus total raw capacity)

- Fault tolerance (maximum number of drives that can fail before data loss)

Do you need more storage? More speed? More fault tolerance? How you lay out your drives will cause dramatic differences in these numbers, which is why deciding this is the first step in your plan.

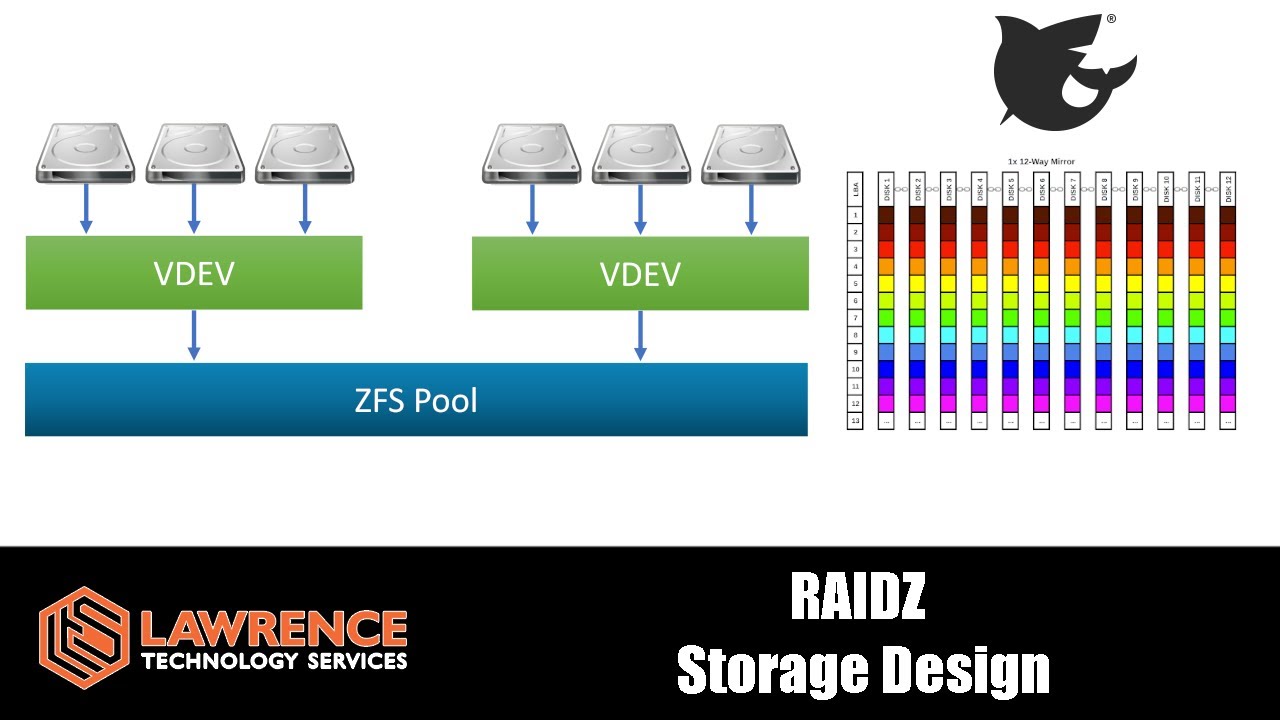

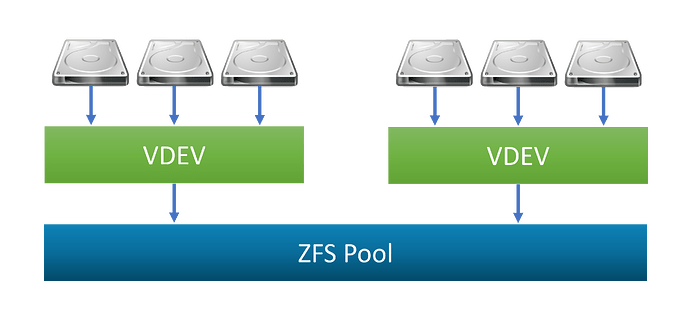

In ZFS, drives are logically grouped together into one or more vdevs. Each vdev can combine physical drives in a number of different RAIDZ configurations. If you have multiple vdevs, the pool data is striped across all the vdevs.

Here are the basics for calculating RAIDZ performance. The terms parity disks and data disks refer, respectively, to the parity level (1 for Z1, 2 for Z2, and 3 for Z3; we’ll call the parity level p) and to the vdev width (the number of disks in the vdev, which we’ll call N) minus p. The effective storage space in a RAIDZ vdev is equal to the capacity of a single disk times the number of data disks in the vdev. If you’re using mismatched disk sizes, it’s the size of the smallest disk times the number of data disks. Fault tolerance per vdev is equal to the parity level of that vdev.
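To make the arithmetic above concrete, here is a minimal Python sketch of those rules. The `RaidzVdev` class and its field names are made up for illustration and are not part of any ZFS or FreeNAS tool. It also ignores real-world overhead such as padding, metadata, and reserved space, so treat the results as upper bounds rather than what the pool will actually report.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class RaidzVdev:
    """Hypothetical model of one RAIDZ vdev (illustration only)."""
    disk_sizes_tb: Tuple[float, ...]  # raw size of each disk, in TB
    parity: int                       # p: 1 for Z1, 2 for Z2, 3 for Z3

    def __post_init__(self):
        if self.parity not in (1, 2, 3):
            raise ValueError("parity level must be 1, 2, or 3")
        if len(self.disk_sizes_tb) <= self.parity:
            raise ValueError("vdev width N must be greater than p")

    @property
    def width(self) -> int:
        # N: the number of disks in the vdev
        return len(self.disk_sizes_tb)

    @property
    def data_disks(self) -> int:
        # N - p
        return self.width - self.parity

    @property
    def usable_tb(self) -> float:
        # Smallest disk times the number of data disks
        return min(self.disk_sizes_tb) * self.data_disks

    @property
    def efficiency(self) -> float:
        # Usable capacity after parity versus total raw capacity
        return self.usable_tb / sum(self.disk_sizes_tb)

    @property
    def fault_tolerance(self) -> int:
        # Drives that can fail in this vdev before data loss
        return self.parity


if __name__ == "__main__":
    z2 = RaidzVdev(disk_sizes_tb=(4.0,) * 6, parity=2)
    print(z2.data_disks, z2.usable_tb, round(z2.efficiency, 2), z2.fault_tolerance)

    # Mismatched disks: the smallest one (3 TB) sets the per-disk capacity.
    mixed = RaidzVdev(disk_sizes_tb=(4.0, 4.0, 4.0, 3.0), parity=1)
    print(mixed.usable_tb)
```

For six 4 TB disks in RAIDZ2, this gives 4 data disks, 16 TB of effective space, and tolerance for 2 failed drives.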

TL;DR: Choose a RAID-Z type based on your IOPS needs and the amount of space you are willing to devote to parity information. If you need more IOPS, use fewer disks per stripe. If you need more usable space, use more disks per stripe.
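The trade-off in the TL;DR can be sketched by splitting the same set of disks into vdevs of different widths. This rough Python comparison leans on a common rule of thumb, which is an assumption here and not a measurement: each RAIDZ vdev delivers roughly the random IOPS of a single disk, and IOPS add up across the vdevs in the pool. The function name `compare_layouts` and the figure of 250 IOPS per disk are both hypothetical.

```python
def compare_layouts(total_disks, disk_tb, parity, per_disk_iops, widths):
    """Return (width, vdev_count, usable_tb, approx_iops) for each width
    that divides total_disks evenly. Rule-of-thumb estimates only."""
    rows = []
    for width in widths:
        if width <= parity or total_disks % width != 0:
            continue  # skip layouts that don't fit the disk count
        vdevs = total_disks // width
        usable_tb = vdevs * (width - parity) * disk_tb
        # Assumption: one RAIDZ vdev is about as fast as one disk for random I/O
        approx_iops = vdevs * per_disk_iops
        rows.append((width, vdevs, usable_tb, approx_iops))
    return rows


if __name__ == "__main__":
    # 12 x 4 TB disks, RAIDZ2, assumed 250 random IOPS per disk
    for width, vdevs, usable, iops in compare_layouts(12, 4, 2, 250, [4, 6, 12]):
        print(f"{vdevs} x {width}-wide RAIDZ2: {usable} TB usable, ~{iops} IOPS")
```

With these assumed numbers, three 4-wide vdevs give 24 TB and about 750 IOPS, while a single 12-wide vdev gives 40 TB and about 250 IOPS: narrower vdevs trade usable space for IOPS.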

For those of you who want to dive deeper into this topic, here are some great write-ups that really helped me understand this much better.

Six Metrics for Measuring ZFS Pool Performance Part 1

Six Metrics for Measuring ZFS Pool Performance Part 2

ZFS Storage Pool Layout (PDF)

https://static.ixsystems.co/uploads/2018/10/ZFS_Storage_Pool_Layout_White_Paper_WEB.pdf

The ZFS ZIL and SLOG Demystified

ZFS RAIDZ stripe width, or: How I Learned to Stop Worrying and Love RAIDZ

ZFS Raidz Performance, Capacity and Integrity

https://calomel.org/zfs_raid_speed_capacity.html

ZFS Record Sizes for Different Workloads

https://jrs-s.net/2019/04/03/on-zfs-recordsize/

Excellent article about the cache vdev or L2ARC

Synchronous vs Asynchronous Writes and the ZIL

Choosing The Right ZFS Pool Layout

Nice article from Ars Technica comparing ZFS to the more traditional RAID6 and RAID10 setups